Vision-and-Language Navigation (VLN) stands as a key research problem of Embodied AI, aiming at enabling

agents to navigate in unseen environments following linguistic instructions. In this field, generalization

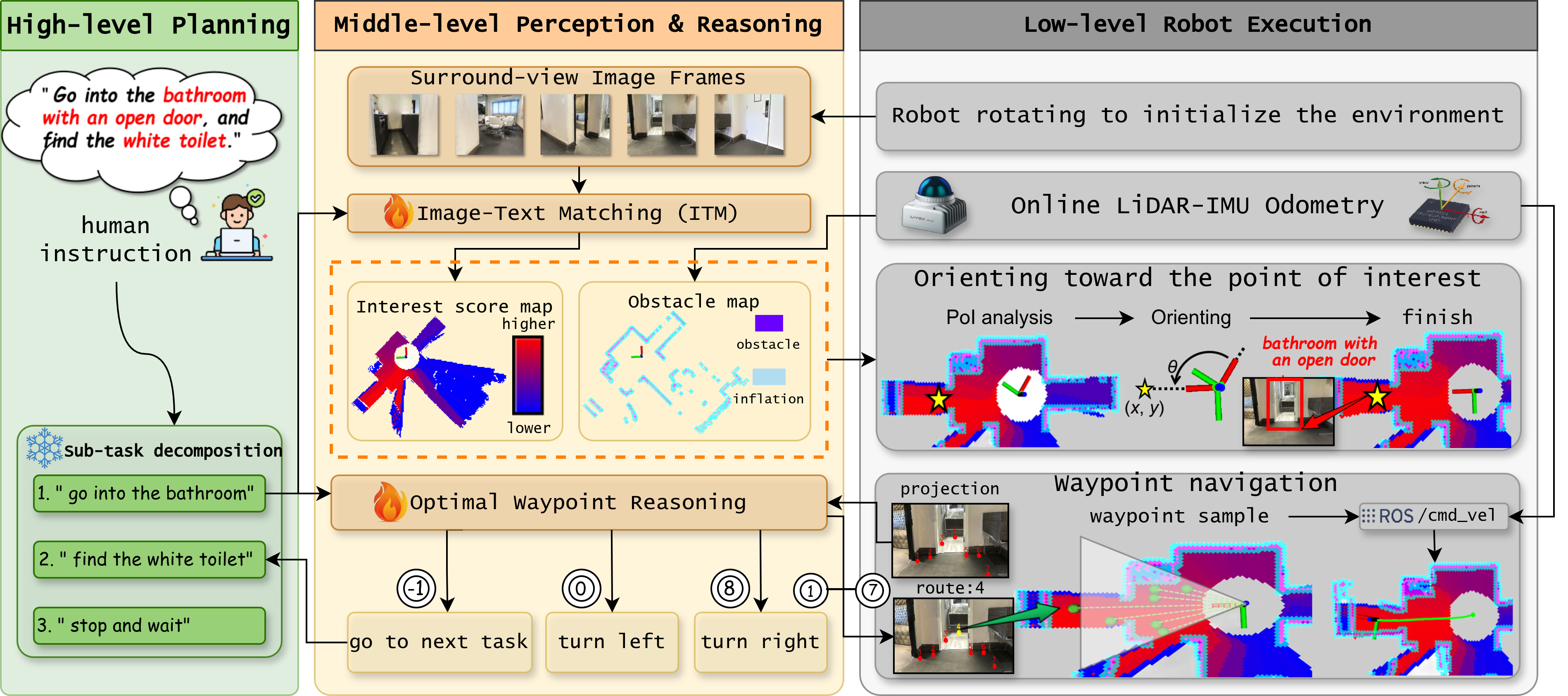

is a long-standing challenge, either to out-of-distribution scenes or from Sim to Real. In this paper, we

propose NaVid, a video-based large vision language model (VLM), to mitigate such a generalization gap.

NaVid makes the first endeavour to showcase the capability of VLMs to achieve state-of-the-art level

navigation performance without any maps, odometer and depth inputs. Following human instruction, NaVid

only requires an on-the-fly video stream from a monocular RGB camera equipped on the robot to output the

next-step action. Our formulation mimics how humans navigate and naturally gets rid of the problems

introduced by odometer noises, and the Sim2Real gaps from map or depth inputs. Moreover, our video-based

approach can effectively encode the historical observations of robots as spatio-temporal contexts for

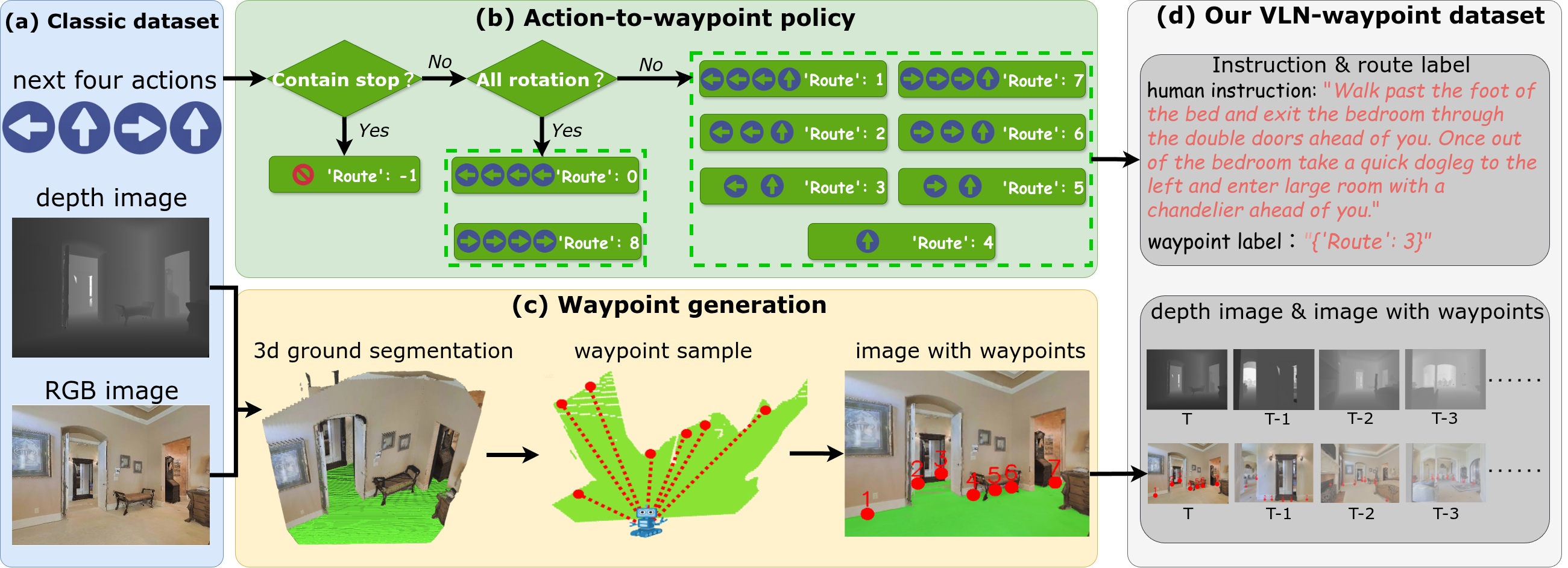

decision making and instruction following. We train NaVid with 510k navigation samples collected from

VLN-CE trajectories, including action planning and instruction-reasoning samples, along with 763k

large-scale web data. Extensive experiments show that NaVid achieves SOTA performance in simulation

environments and the real world, demonstrating superior cross-dataset and Sim2Real transfer. We thus

believe our proposed VLM approach plans the next step for not only the navigation agents but also this

research field. We will release the code and data to benefit the community.